In the tech world, there is a common belief that the higher the algorithm's accuracy, the better. However, from a business perspective, chasing "fractions of a percent" in image recognition precision can be the shortest path to burning through your budget.

As a Business Owner, you face a dilemma: should you choose the most accurate solution or the fastest one? The answer is: choose the one that best scales your profit.

1. The Currency of Milliseconds: Why Speed Matters

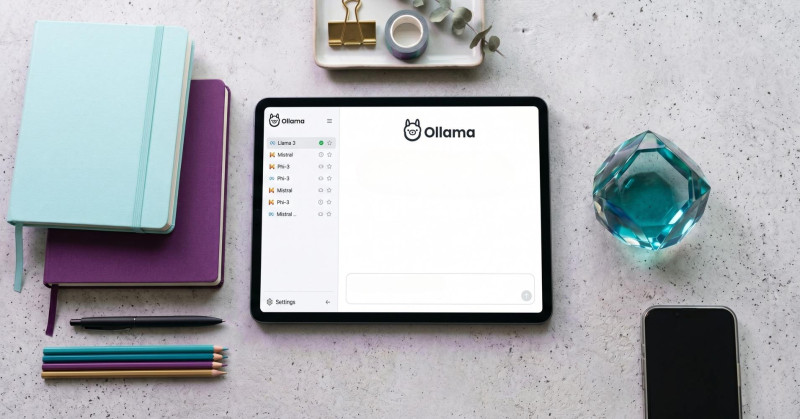

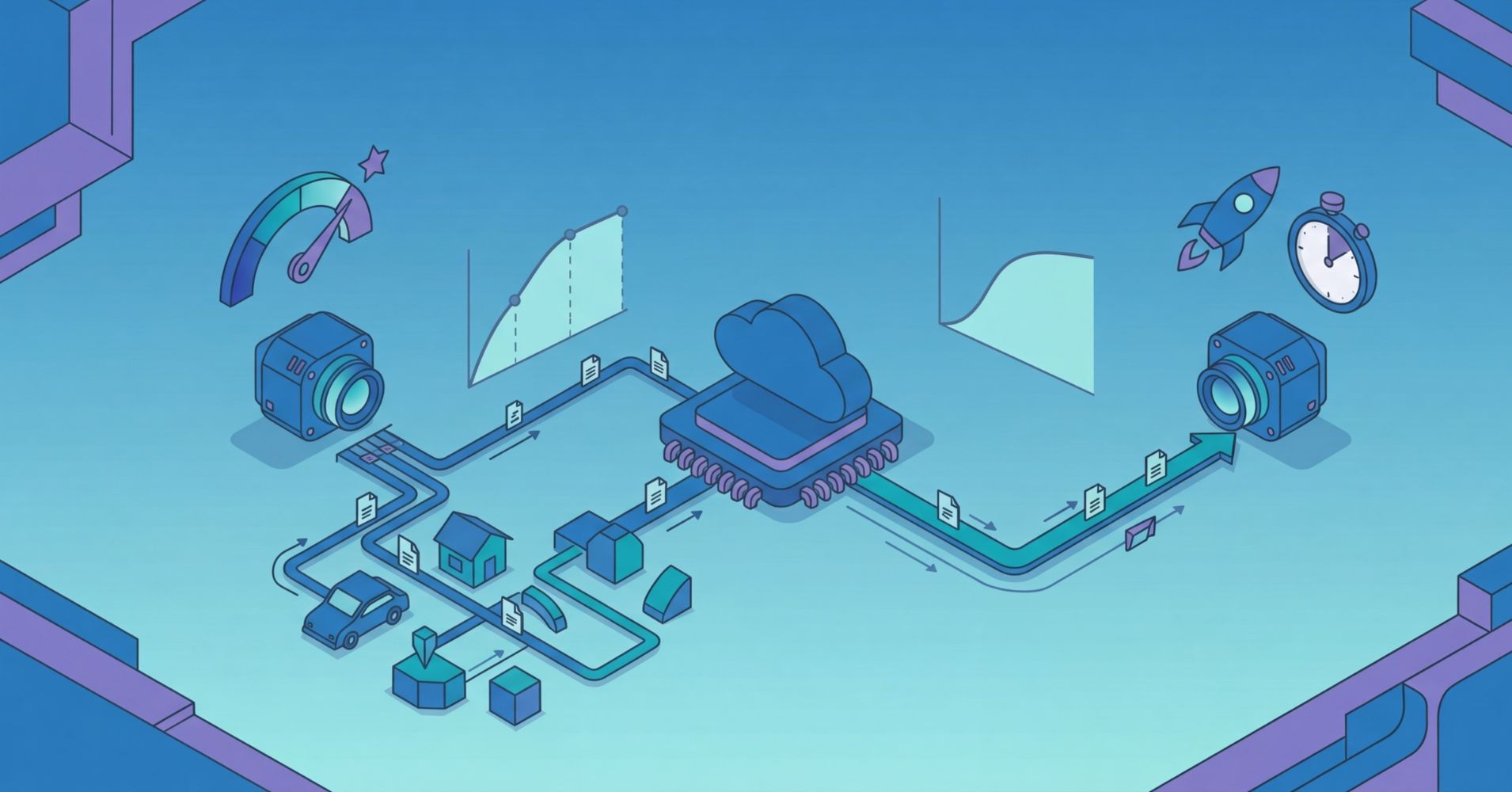

In Computer Vision, time isn't just a convenience; it's your business's throughput. If you are deploying a video analysis system on a production line that churns out 10 products per second, the whole pipeline (image capture, model inference, and the resulting action) has at most 100 ms per product when items are processed one at a time.
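The arithmetic behind that 100 ms figure can be sketched in a few lines. This is a minimal illustration, not a benchmark: the stage names and timings below are hypothetical placeholders, and the check assumes items are processed one at a time with no batching or parallel pipelines.

```python
def per_item_budget_ms(items_per_second: float) -> float:
    """Time available per item, in milliseconds, when items are handled one at a time."""
    if items_per_second <= 0:
        raise ValueError("items_per_second must be positive")
    return 1000.0 / items_per_second


def fits_budget(stage_latencies_ms: dict, items_per_second: float) -> bool:
    """True if the summed stage latencies stay under the per-item budget."""
    return sum(stage_latencies_ms.values()) < per_item_budget_ms(items_per_second)


# Hypothetical stage timings for illustration only; measure your own pipeline.
stages = {"capture": 15.0, "preprocess": 10.0, "inference": 45.0, "actuation": 20.0}

budget = per_item_budget_ms(10)  # 10 products per second, as in the example above
used = sum(stages.values())
print(f"budget: {budget:.0f} ms, used: {used:.0f} ms, fits: {fits_budget(stages, 10)}")
```

The useful habit here is to budget the whole chain, not just the model: a fast model can still miss the deadline if capture or actuation eats most of the time.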

YOLO-type models (You Only Look Once): These are the "sprinters" of the AI world. They are optimized for real-time performance. They allow for image analysis directly on inexpensive devices (Edge Computing), eliminating the costs of sending gigabytes of data to the cloud.

Applications: Logistics, crowd counting, security systems, high-speed quality control.

2. The Cost of Precision: When is 99% Too Much?

Imagine two scenarios. In the first, a system counts vacant parking spaces. If it occasionally misses a car (say, 98% accuracy), the consequences are minor. In the second, an algorithm analyzes X-rays for cancer. Here, the same 2% error rate can be a catastrophe.

The most accurate models, such as large Vision Transformers (ViT), typically require far more computational power than lightweight detectors.

High Precision = Expensive Infrastructure: Running the most accurate models requires servers with powerful GPUs. The cost of maintaining such a system in the cloud can swallow the margin generated by the innovation itself.

The Golden Rule: Look for the Minimum Viable Accuracy (MVA) point. This is the lowest level of accuracy that solves your business problem while maintaining the lowest operational costs.
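One way to make the MVA idea concrete is to filter candidate models by the accuracy your use case needs and then pick the cheapest one that remains. The sketch below assumes you have already measured each model's accuracy and throughput yourself; the `Candidate` class, the `pick_mva` helper, the model names, and every number are hypothetical and only illustrate the selection logic.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Candidate:
    name: str
    accuracy: float           # measured on your own validation set, 0..1
    hourly_cost: float        # hardware or cloud cost per hour, any currency
    images_per_second: float  # sustained throughput on that hardware

    @property
    def cost_per_1k_images(self) -> float:
        images_per_hour = self.images_per_second * 3600
        return self.hourly_cost / images_per_hour * 1000


def pick_mva(candidates, required_accuracy: float) -> Optional[Candidate]:
    """Cheapest candidate that still meets the required accuracy, or None."""
    viable = [c for c in candidates if c.accuracy >= required_accuracy]
    return min(viable, key=lambda c: c.cost_per_1k_images, default=None)


# Hypothetical figures for illustration only.
candidates = [
    Candidate("small-edge-detector", accuracy=0.95, hourly_cost=0.02, images_per_second=40.0),
    Candidate("medium-detector", accuracy=0.98, hourly_cost=0.50, images_per_second=60.0),
    Candidate("large-transformer", accuracy=0.995, hourly_cost=3.00, images_per_second=20.0),
]

choice = pick_mva(candidates, required_accuracy=0.97)
print(choice.name if choice else "no candidate meets the requirement")
```

The required accuracy is a business input, not a technical one: it comes from asking what an error actually costs you, as in the parking versus X-ray comparison.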

3. Cloud vs. Edge: Where Does the Heart of Your System Beat?

The choice between a fast and an accurate model determines your IT architecture and your future invoices.

| Feature | Fast Models (Edge) | Heavy Models (Cloud) |
| --- | --- | --- |
| Operational Cost | Low (one-time hardware purchase) | High (monthly compute subscription) |
| Latency | Minimal (local processing) | Dependent on internet speed |
| Data Privacy | High (data never leaves the site) | Lower (data travels through the web) |
| Scalability | Easy (add another device) | Costly (requires more cloud compute) |
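The cost comparison between a one-time hardware purchase and a monthly subscription comes down to a break-even calculation. The sketch below is a simplified model under stated assumptions: costs are constant month to month, the edge device also has a small running cost (power, maintenance), and all figures are hypothetical. The function name `break_even_months` is illustrative, not taken from any library.

```python
import math
from typing import Optional


def break_even_months(edge_hardware_cost: float,
                      edge_monthly_cost: float,
                      cloud_monthly_cost: float) -> Optional[int]:
    """First month in which cumulative edge spend is no higher than cloud spend.

    Returns None when the edge setup never pays for itself.
    """
    monthly_saving = cloud_monthly_cost - edge_monthly_cost
    if monthly_saving <= 0:
        return None
    return math.ceil(edge_hardware_cost / monthly_saving)


# Hypothetical figures for illustration only.
months = break_even_months(edge_hardware_cost=2400.0,
                           edge_monthly_cost=50.0,
                           cloud_monthly_cost=450.0)
print(f"edge hardware pays for itself after {months} months")
```

If the break-even point is longer than the expected lifetime of the hardware or the project, the cloud option may be the cheaper one despite the higher monthly invoice.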

4. Three Questions You Must Ask Your IT Team

Before you sign off on a budget for Computer Vision implementation, make sure your team isn't "using a sledgehammer to crack a nut." Ask them:

What is the cost per inference (operation)? How much does it actually cost us to analyze one image or one minute of footage?

Where is the bottleneck? Is it the processor, or perhaps the data transfer speed that limits us?

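Can we use quantization? This is a technique that "slims down" a model by storing its numbers at lower precision, so it can run around 3x faster on cheaper hardware, often with a loss of only about 1% in accuracy. The actual gain depends on the model and the hardware, so ask for measurements.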
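To give a feel for what quantization does under the hood, here is a minimal sketch of one common variant: symmetric 8-bit quantization of a list of weights. It is a toy in plain Python, not how a production toolkit implements it, and the weights are hypothetical. It only shows the core idea: each 32-bit float is replaced by an 8-bit integer plus one shared scale factor, at the price of a small rounding error. It does not reproduce the speed or accuracy figures quoted above.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the integers and the shared scale."""
    return [q * scale for q in quantized]


# Hypothetical weights for illustration only.
weights = [0.82, -1.27, 0.003, 0.40, -0.65]

q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("quantized:", q)
print("largest rounding error:", max_error)
```

Each value now needs one byte instead of four, which is where the memory and bandwidth savings come from. Whether the rounding error hurts accuracy in practice is something your team has to measure on your own data.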