AI-assisted code review has become not only possible but essentially indispensable in rapid development cycles. Today's language models are no longer limited to simple syntax checking. They are capable of analyzing code at several advanced levels. We have prepared a guide on how to perform code review using Claude AI – one of the most advanced and recommended tools for developers. Enjoy the read!

Can you perform code review using AI?

The short answer is yes, you can, and you should. Artificial intelligence is now advanced enough to assist developers in several areas of review.

Precise Logic Bug Detection

AI easily identifies subtle mistakes, such as off-by-one errors, memory leaks, or improper exception handling, which often escape the tired human eye during manual reviews of hundreds of lines of code.

Security and Data Protection

Modern algorithms act as automated auditors, scanning every change for critical vulnerabilities like SQL Injection or Cross-Site Scripting (XSS).
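To make the SQL Injection case concrete, here is a minimal sketch of the pattern a reviewer (human or AI) would typically flag, next to the usual fix. The function names, the `users` table, and the `$1` placeholder style (as used by PostgreSQL drivers) are assumptions for illustration, not taken from any specific codebase.

```typescript
// Hypothetical query shape: SQL text with placeholders plus a separate value list.
interface ParameterizedQuery {
  text: string;
  values: string[];
}

// Vulnerable: user input is concatenated straight into the SQL text.
function unsafeFindUserSql(name: string): string {
  return "SELECT id FROM users WHERE name = '" + name + "'";
}

// Safer: the SQL text stays constant and the input travels as a bound value.
function findUserQuery(name: string): ParameterizedQuery {
  return { text: "SELECT id FROM users WHERE name = $1", values: [name] };
}

const attackInput = "x' OR '1'='1";

// The attacker's quote closes the string literal and changes the query's meaning.
console.log(unsafeFindUserSql(attackInput));

// Here the same input is only ever data, never SQL.
console.log(findUserQuery(attackInput));
```

The point of the second function is that the query text never depends on user input, which is the property a security-focused review looks for.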

Sensitive Data Leak Prevention

AI serves as an effective barrier against data leaks, instantly blocking attempts to push API keys or passwords hidden in configuration files to the repository. This ensures that application security (AppSec) is monitored in real-time, even before the production deployment stage.

Full Repository Context

What sets advanced tools like Claude Code apart is their ability to understand the full context of the repository. Unlike tools that inspect only a single file or only the changed lines (the so-called diff), it can take in the architecture of the entire system and predict how a modification in one module will affect dependencies in other parts of the application.

Style Guide Adherence

AI rigorously enforces standards and style, ensuring every line is consistent with internal company guidelines, which guarantees high readability and long-term maintainability.

What exactly does AI do?

Unlike traditional static code analysis tools (like SonarQube), which match code against known rules, AI can often infer the developer's intent.

Detecting "Logical Holes"

AI can notice that even when the code is syntactically correct, a function like calculateDiscount might not handle an empty cart, potentially leading to a division-by-zero error.
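The empty-cart case might look like the sketch below. The discount rule (a fixed voucher expressed as a share of the cart total) and the `CartItem` shape are assumptions made for illustration; only the function name comes from the example above. Note that in TypeScript a division by zero does not throw. It yields `Infinity` or `NaN`, so the bug would show up as a silently wrong discount rather than a crash.

```typescript
// Hypothetical cart item shape.
interface CartItem {
  price: number;
  quantity: number;
}

// Hypothetical rule: returns the voucher as a fraction of the cart total, capped at 1 (100%).
function calculateDiscount(items: CartItem[], voucher: number): number {
  const total = items.reduce((sum, item) => sum + item.price * item.quantity, 0);

  // Guard for the empty (or zero-value) cart: without it we would compute voucher / 0.
  if (total === 0) {
    return 0;
  }

  return Math.min(voucher / total, 1);
}

console.log(calculateDiscount([], 10));
console.log(calculateDiscount([{ price: 50, quantity: 2 }], 10));
```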

Context-Aware Review

Modern models (Claude 3.5/4) analyze the entire repository, not just the changed file. They know that changing a data type in User.id in the database requires updates in 15 other microservices.

"On-the-fly" Test Generation

During a review, AI often suggests: "You've written this function, but it's missing a test for negative values. Here is the ready-to-use test code in Jest".
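A suggested test of this kind could look roughly like the following. To keep the sketch self-contained it uses a tiny hand-written helper instead of Jest, and both `applyRefund` and `expectThrows` are hypothetical names invented for this example.

```typescript
// Hypothetical function under review: adds a refund to an account balance.
function applyRefund(balance: number, amount: number): number {
  if (!isFinite(amount) || amount < 0) {
    throw new RangeError("refund amount must be a non-negative number");
  }
  return balance + amount;
}

// Minimal stand-in for a test runner's "expect(...).toThrow()" check.
function expectThrows(fn: () => unknown): boolean {
  try {
    fn();
    return false;
  } catch {
    return true;
  }
}

// The case the reviewer asked for: negative values must be rejected.
const rejectsNegative = expectThrows(() => applyRefund(100, -5));

// A regular value still works as before.
const acceptsPositive = applyRefund(100, 5) === 105;

console.log({ rejectsNegative, acceptsPositive });
```

In a real project the same two checks would normally live in the team's test framework rather than in ad hoc helpers.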

The impact of AI code review on speed and efficiency

Implementing AI into the code review process in 2026 has ceased to be an experiment and has become a hard business necessity. Industry reports, including the latest data from GitHub and State of DevOps, indicate a drastic improvement in key performance indicators (KPIs) for development teams.

First Response Time: While a traditional review by another developer takes an average of 4 to 24 hours, tools like Claude Code or Copilot provide feedback in less than 5 minutes. Even measured against the 4-hour lower bound, that cuts the wait for first feedback by roughly 98%.

Cycle Time Reduction: Because AI catches syntax errors, security gaps, and style inconsistencies "on the fly," the number of required iterations drops from the typical 3–5 to just one or two. This translates to a 50% reduction in production cycle time.

Bug Detection Accuracy: The precision of algorithms allows for increasing the detection of critical bugs from 60% to as high as 90%, which drastically lowers the cost of maintaining technical debt later on.

The Human Dimension: Automating repetitive review elements eliminates the frustration associated with long waits for feedback, significantly raising overall developer satisfaction. Instead of wasting energy on "ping-pong" matches over semicolons and formatting, developers can focus on what matters most – architecture and delivering real business value.

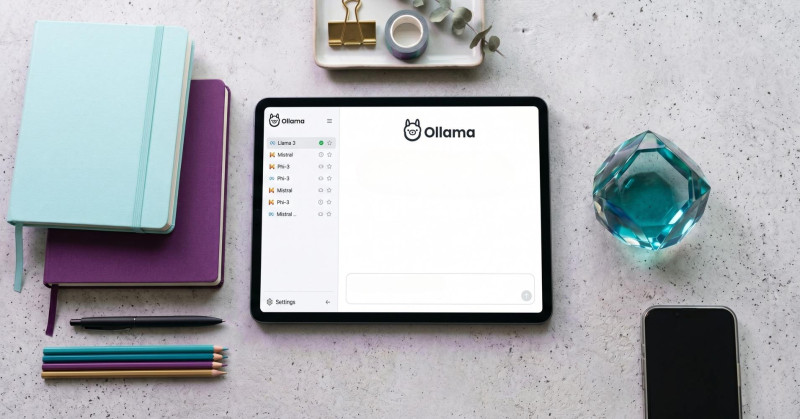

How does Claude Code Review work?

Claude Code offers a Code Review feature available as a terminal tool or a GitHub integration (in the Research Preview version for teams).

Multi-stage Analysis

Claude doesn't just throw out an "opinion" immediately. It first examines the project structure, reads definition files (e.g., CLAUDE.md), and then launches agents that check correctness, security, and architecture.

Inline Comments

If you use the GitHub integration, Claude leaves comments directly in your Pull Request (PR) on the exact lines that require improvement.

Change Verification

It can not only point out an error but also suggest ready-to-paste code ("one-click fix").

How to correctly perform code review with Claude AI?

To get the most out of Claude and avoid "noise" (unnecessary comments), follow these rules:

A. Create a CLAUDE.md file: This is the most important step. Claude Code reads this file before every analysis. It should contain naming standards, preferred libraries (e.g., "we use axios instead of fetch"), and architecture guidelines (e.g., "business logic always in the /services folder").

B. Prepare the context (Prompting): If performing a review manually in chat or via CLI, do not send just the bare code. Use a template like: "Review this Pull Request. Focus on: 1. Async logic correctness, 2. Absence of regressions in the auth module, 3. Compliance with our unit tests".

C. The "Explore -> Plan -> Review" process: Instead of asking for a quick check, force Claude into the following process:

Explore: Let AI scan files related to the change.

Plan: Let it describe its understanding of the introduced changes.

Review: Only after your confirmation should it proceed to the actual review and bug checking.

D. The "AI proposes, human approves" rule: Never allow AI to automatically merge code. Claude can be wrong, especially in specific business logic. Always treat its remarks as suggestions, not an ultimate verdict. Remember hallucinations: AI can still make mistakes or suggest an elegant but incompatible fix for a niche library you are using.
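Rules B through D can be sketched as a small piece of workflow code. This is only an illustration of the idea, assuming you orchestrate the review yourself: every type and function name below is hypothetical and none of it is part of Claude Code's own API.

```typescript
type Stage = "explore" | "plan" | "review";

// Hypothetical session state tracked by your own tooling.
interface ReviewSession {
  stage: Stage;
  planConfirmedByHuman: boolean;
}

// Rule B: build a focused prompt instead of sending bare code.
function buildReviewPrompt(focusAreas: string[]): string {
  const numbered = focusAreas.map((area, i) => `${i + 1}. ${area}`).join(", ");
  return `Review this Pull Request. Focus on: ${numbered}.`;
}

// Rule C: advance explore -> plan -> review, but never start the
// review until a human has confirmed the plan.
function nextStage(session: ReviewSession): ReviewSession {
  switch (session.stage) {
    case "explore":
      return { ...session, stage: "plan" };
    case "plan":
      if (!session.planConfirmedByHuman) {
        throw new Error("Plan must be confirmed by a human before the review starts");
      }
      return { ...session, stage: "review" };
    case "review":
      return session; // final stage, nothing further to advance to
  }
}

// Rule D: the AI verdict is advisory; only human approval unlocks the merge.
function canMerge(approvals: { ai: boolean; human: boolean }): boolean {
  return approvals.human;
}

console.log(buildReviewPrompt(["Async logic correctness", "Absence of regressions in the auth module"]));
console.log(canMerge({ ai: true, human: false }));
```

The design choice worth noting is that the human gate is enforced in code (an exception and a boolean check) rather than left to convention.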

What are the risks associated with AI code review?

The biggest mistake in implementing AI is not a lack of trust, but blind trust. The key to success is treating AI as a powerful linter with an advisory function, not as a standalone decision-maker.

"Illusion of Correctness" and skill atrophy

This is the most common threat. AI generates comments that sound professional even when they are wrong. Developers might start reflexively clicking "Approve" or "Apply fix," leading to a loss of system understanding.

How to avoid: Apply the Human-in-the-loop principle. AI should be treated like a junior developer – its suggestions require human verification.

Lack of business logic understanding (Context Gap)

AI handles syntax and patterns well but rarely understands why a function exists in your business context.

Risk: AI might suggest a "code optimization" that is technically correct but breaks specific business or legal rules (e.g., regarding medical or financial data).

How to avoid: Use configuration files like CLAUDE.md to "feed" AI with business and architectural context. Never entrust critical modules (e.g., payment systems) to AI without expert oversight.
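One way to keep that context maintainable is to store conventions as structured data and render them into CLAUDE.md. The sketch below assumes a simple Markdown layout with one heading per topic. CLAUDE.md is free-form, so the section titles, the `TeamConventions` shape, and the sample business rule are illustrative assumptions, not a required format.

```typescript
// Hypothetical structure for the conventions a team wants the reviewer to know.
interface TeamConventions {
  naming: string[];
  libraries: string[];
  architecture: string[];
  businessRules: string[];
}

// Renders one Markdown section: a heading followed by a bullet per rule.
function renderSection(title: string, rules: string[]): string {
  return [`## ${title}`, ...rules.map((rule) => `- ${rule}`)].join("\n");
}

// Assembles the full file content; writing it to disk is left to the caller.
function renderClaudeMd(c: TeamConventions): string {
  return [
    "# Project conventions",
    renderSection("Naming", c.naming),
    renderSection("Preferred libraries", c.libraries),
    renderSection("Architecture", c.architecture),
    renderSection("Business rules", c.businessRules),
  ].join("\n\n") + "\n";
}

const claudeMd = renderClaudeMd({
  naming: ["camelCase for functions, PascalCase for types"],
  libraries: ["We use axios instead of fetch"],
  architecture: ["Business logic always lives in the /services folder"],
  businessRules: ["Never log patient identifiers"], // invented example rule
});

console.log(claudeMd);
```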

"Hallucinating" dependencies and libraries

AI models tend to suggest functions or libraries that sound logical but do not exist.

Risk: Installing a package name that AI invented opens the door to typosquatting-style attacks, in which an attacker registers that name and publishes malicious code under it.

How to avoid: Always verify suggested imports. Use tools like Snyk or Socket to check the authenticity of libraries.
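A first line of defense can be automated cheaply: flag every package imported by a suggestion that is not already declared in your project. The sketch below assumes ES-style `import ... from "..."` statements and a dependency list you pass in yourself (for example, the keys from package.json). It does not check a registry, so it complements tools like Snyk or Socket rather than replacing them. The package name `axios-fast-retry-utils` is invented for the example.

```typescript
// Extracts bare package names from "import ... from '...'" statements,
// skipping relative and absolute file paths.
function importedPackages(source: string): string[] {
  const pattern = /from\s+["']([^"']+)["']/g;
  const names: string[] = [];
  let match: RegExpExecArray | null;
  while ((match = pattern.exec(source)) !== null) {
    const specifier = match[1];
    if (specifier[0] === "." || specifier[0] === "/") continue; // local file
    const parts = specifier.split("/");
    // Scoped packages (@scope/name) keep two segments; others keep one.
    const name = specifier[0] === "@" ? parts.slice(0, 2).join("/") : parts[0];
    if (names.indexOf(name) === -1) names.push(name);
  }
  return names;
}

// Returns imported packages that are missing from the declared dependency list.
function undeclaredPackages(source: string, declared: string[]): string[] {
  return importedPackages(source).filter((name) => declared.indexOf(name) === -1);
}

const suggestedCode = [
  'import axios from "axios";',
  'import { fastRetry } from "axios-fast-retry-utils";',
  'import { helper } from "./helper";',
].join("\n");

// Anything listed here deserves a manual look before it is installed.
console.log(undeclaredPackages(suggestedCode, ["axios"]));
```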

Data Leakage and Privacy

Sending code for analysis risks confidential information ending up in AI training sets.

How to avoid: Use Enterprise versions of tools (e.g., Claude for Enterprise), which guarantee your code is not used for training. Implement secret scanners (e.g., TruffleHog) to block sensitive data from being sent to AI.
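To illustrate the idea behind a secret scanner, here is a deliberately simplified redaction pass that could run before any code leaves your machine. The two patterns are assumptions chosen for the example (the well-known shape of an AWS access key ID and a generic quoted `password`/`apiKey`/`secret` assignment). Dedicated scanners such as TruffleHog cover far more cases, so treat this as a sketch of the mechanism, not a substitute.

```typescript
// Illustrative patterns only; a real scanner ships many more.
const SECRET_PATTERNS: RegExp[] = [
  /AKIA[0-9A-Z]{16}/g, // shape of an AWS access key ID
  /(password|api[_-]?key|secret)\s*[:=]\s*["'][^"']+["']/gi, // quoted credential assignment
];

// Replaces every match with a placeholder and counts how many were found.
function redactSecrets(text: string): { redacted: string; hits: number } {
  let hits = 0;
  let redacted = text;
  for (const pattern of SECRET_PATTERNS) {
    redacted = redacted.replace(pattern, () => {
      hits += 1;
      return "[REDACTED]";
    });
  }
  return { redacted, hits };
}

const snippet = [
  'const apiKey = "abc123";',
  'const awsId = "AKIAIOSFODNN7EXAMPLE";', // AWS's documented example key ID
].join("\n");

const result = redactSecrets(snippet);
console.log(result.redacted);
console.log(result.hits);
```

A caller could refuse to send the code at all when `hits` is greater than zero, which is the stricter of the two possible policies.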

"AI Brain Fry" (Information Overload)

AI agents can generate hundreds of comments on one PR, focusing on insignificant details.

Risk: Developers experience decision fatigue and start ignoring critical errors among trivial "nit-picks" about formatting.

How to avoid: Calibrate the severity level. Set filters so AI reports only "Medium" and "High" priority errors.
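If your tooling exposes review comments as data, the severity filter is a few lines of code. The `ReviewComment` shape and the three severity levels below are assumptions for illustration, since the actual output format depends on the tool and integration you use.

```typescript
type Severity = "low" | "medium" | "high";

// Hypothetical shape of a single review comment.
interface ReviewComment {
  file: string;
  line: number;
  severity: Severity;
  message: string;
}

// Numeric ranks make "at least medium" a simple comparison.
const SEVERITY_RANK: Record<Severity, number> = { low: 0, medium: 1, high: 2 };

// Keeps only comments at or above the chosen minimum severity.
function filterBySeverity(comments: ReviewComment[], minimum: Severity): ReviewComment[] {
  return comments.filter((c) => SEVERITY_RANK[c.severity] >= SEVERITY_RANK[minimum]);
}

const comments: ReviewComment[] = [
  { file: "auth.ts", line: 12, severity: "high", message: "Token is never validated" },
  { file: "cart.ts", line: 40, severity: "medium", message: "Empty cart is not handled" },
  { file: "cart.ts", line: 41, severity: "low", message: "Prefer single quotes" },
];

// Only the "medium" and "high" findings reach the developer.
console.log(filterBySeverity(comments, "medium"));
```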

Technical FAQ: Claude AI Code Review

How to install and run Claude Code for local review? Claude Code is available as a CLI tool. Typically, you install the package via a manager (e.g., npm) and initialize it in the project's root folder. It will scan the structure to understand the architecture before analysis.

Do I need to instruct Claude about my standards every time? No, that is what the CLAUDE.md file is for. It is the most important configuration step.

How to integrate Claude with the GitHub PR process? Claude offers cloud agent integration. Once configured, it leaves inline comments and can suggest "one-click fixes".

What if Claude suggests a wrong solution? This may be a hallucination or simply a misreading of your context. Always apply the Human-in-the-loop principle – the developer must verify every suggestion.

Is my code safe? Large organizations should use Claude for Enterprise to ensure data privacy and that code is not used for training.

How to avoid too many insignificant notes (nit-picks)? Calibrate the model's severity level, setting filters for "Medium" and "High" priority errors. Leave purely stylistic issues to dedicated linters like Prettier or ESLint.